Image by Author

Gemini is a new model created by Google, and Bard is becoming usable again. With Gemini, it is now possible to get the best answers to your questions by providing images, audio, and text. In this tutorial, we will learn about the Gemini API and how to set it up on your machine. We will also explore various functions of the Python API, including text generation and image understanding.

Gemini is a new AI model developed through collaboration between Google teams, including Google Research and Google DeepMind. It was built specifically to be multimodal, meaning it can understand and work with different types of data such as text, code, audio, images, and video. Gemini is the most advanced AI model developed by Google to date. It is designed to be highly flexible so that it can run well on a wide range of systems, from data centers to mobile devices. This means it has the potential to change the way businesses and developers build and scale AI applications.

Here are three versions of the Gemini model designed for different use cases:

- Gemini Ultra: the largest and most advanced version, capable of handling complex tasks.
- Gemini Pro: a standard version with good performance and scalability.
- Gemini Nano: well suited to mobile devices.

Image from Introducing Gemini

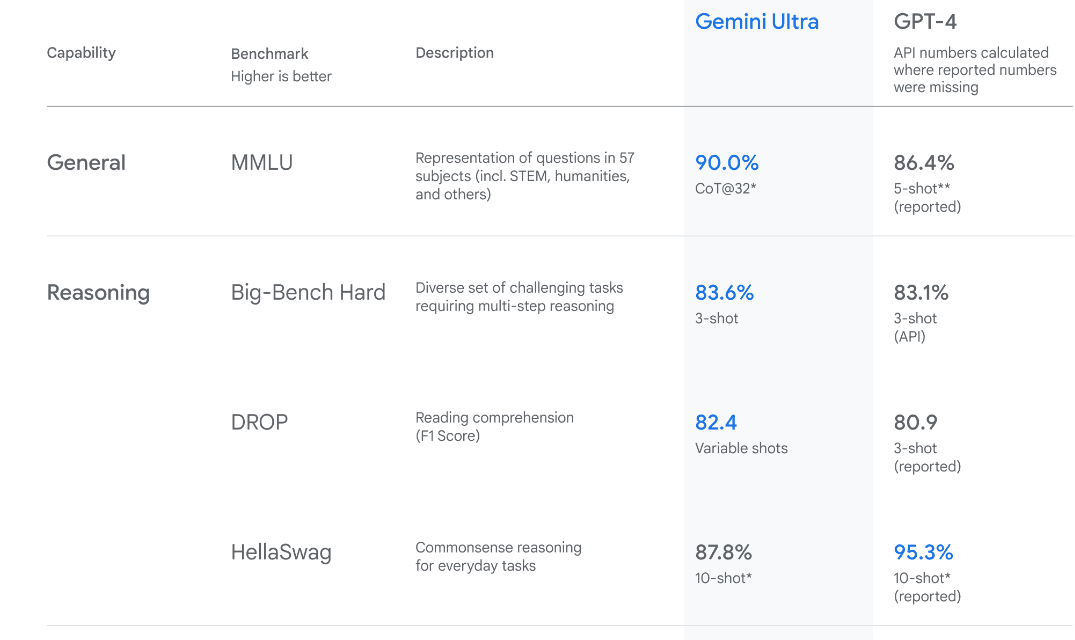

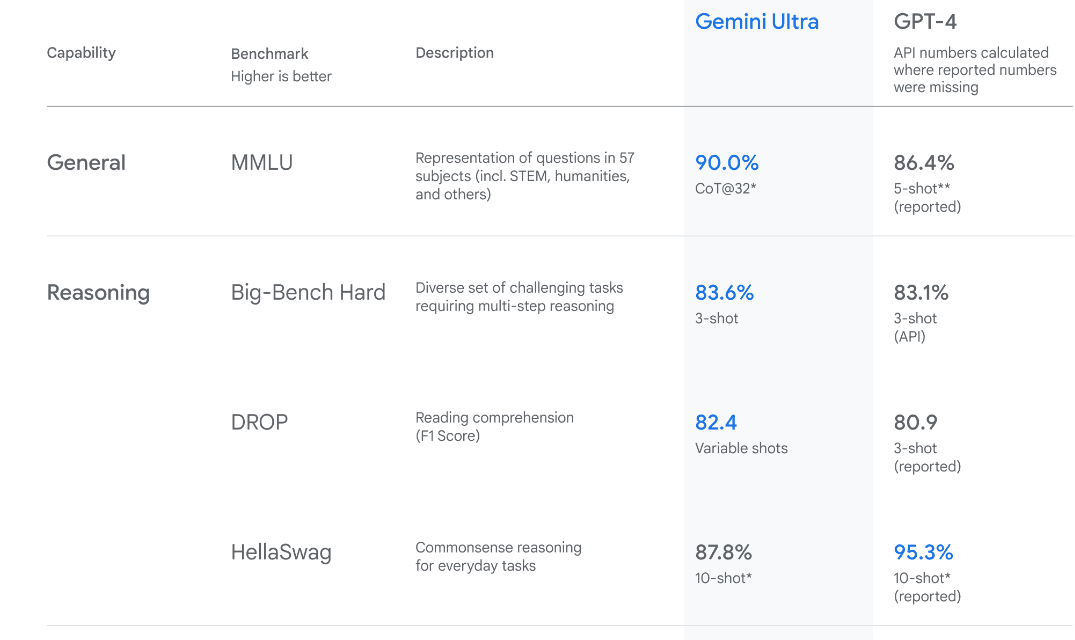

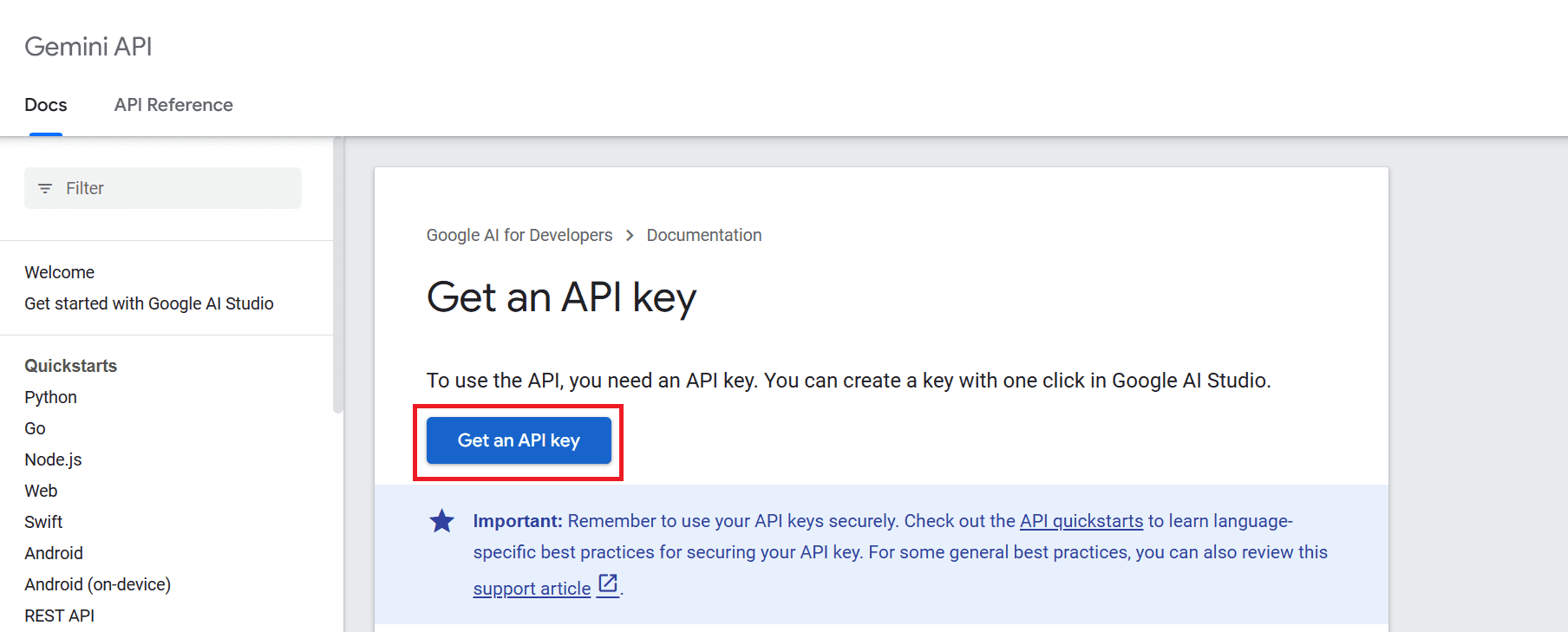

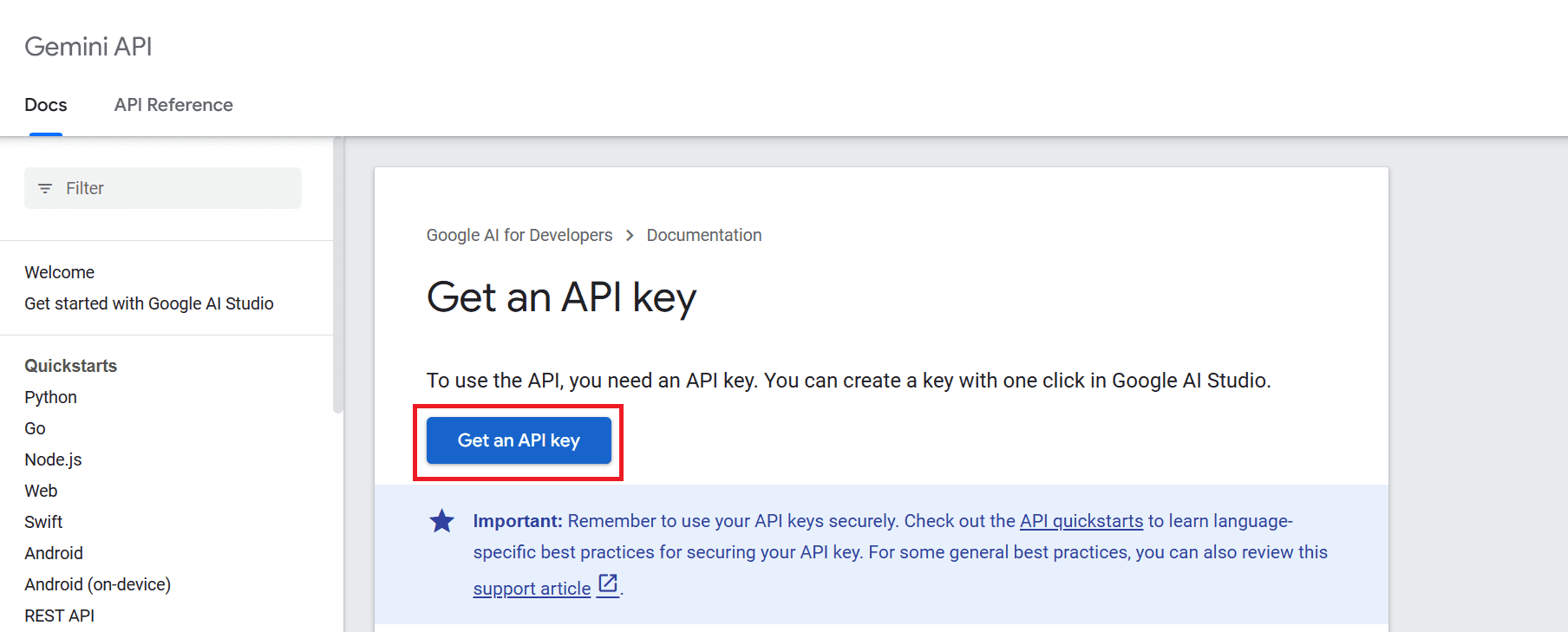

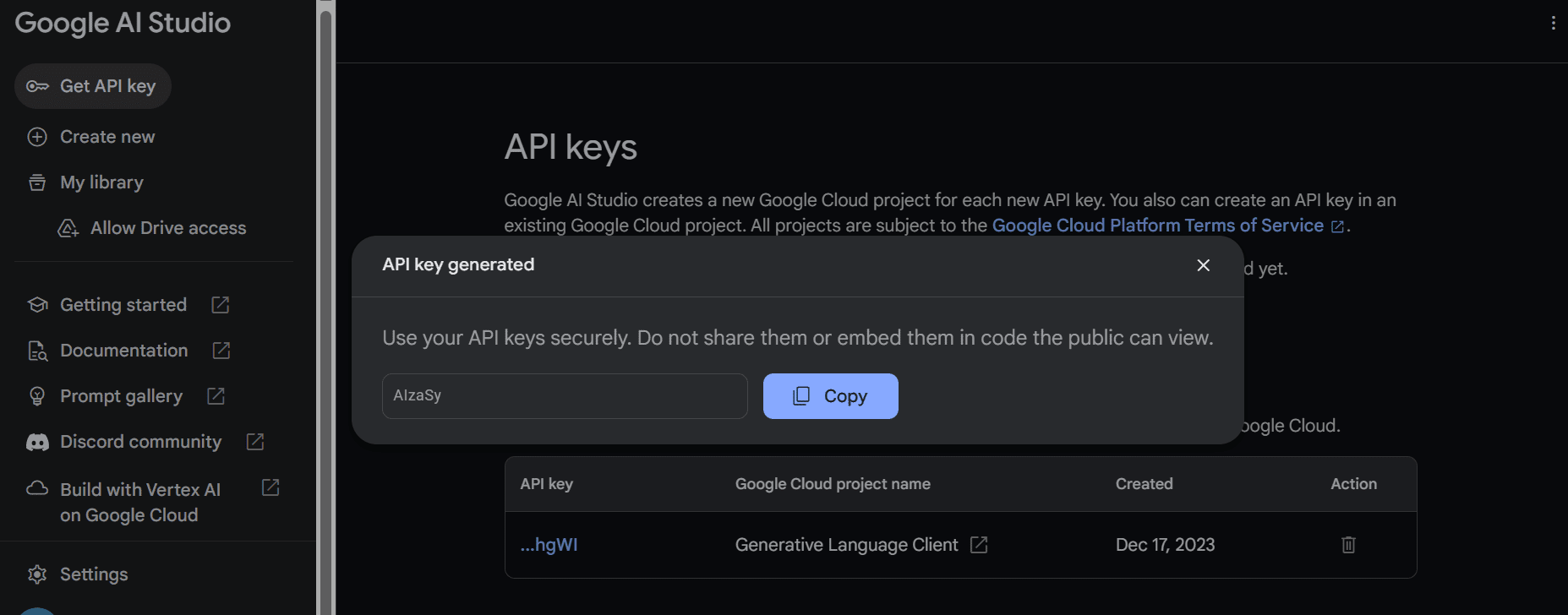

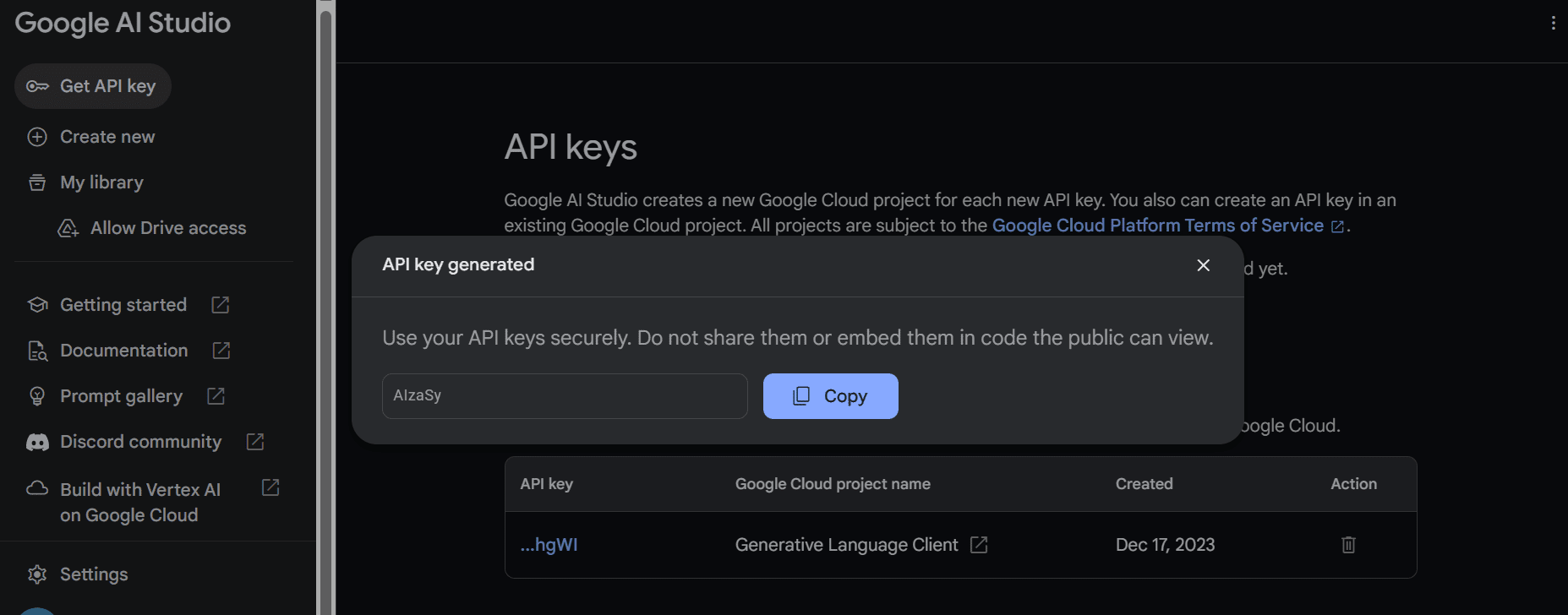

Gemini Ultra has state-of-the-art performance, surpassing GPT-4 on several metrics. It is the first model to outperform human experts on the Massive Multitask Language Understanding (MMLU) benchmark, which tests world knowledge and problem solving across 57 different subjects. This shows its ability to understand and solve problems.

To use the API, we first need to get an API key, which you can get from here:

After that, click the "Get API key" button and then click "Create API key in new project".

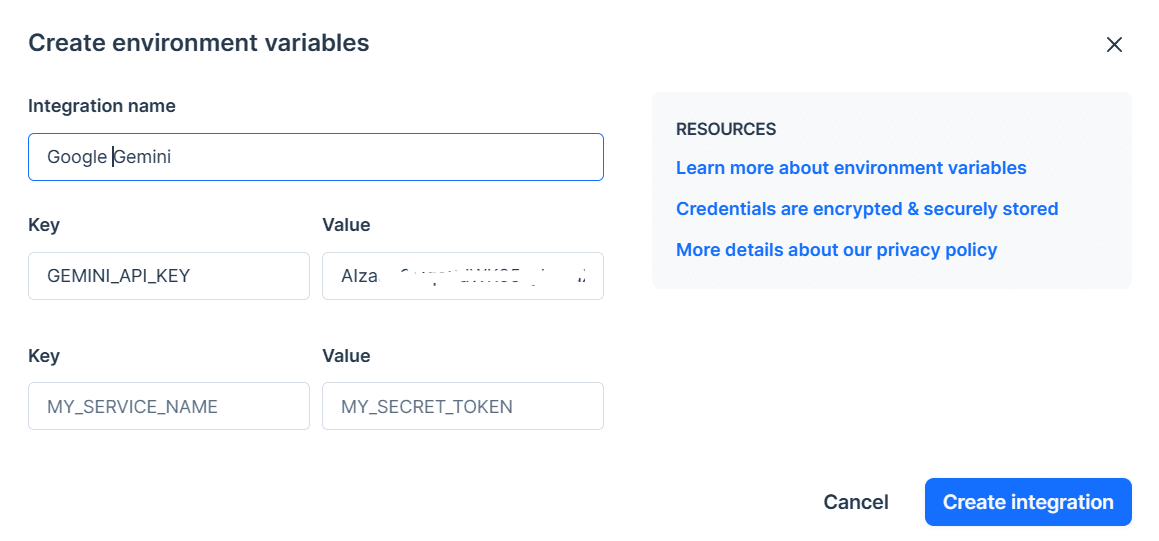

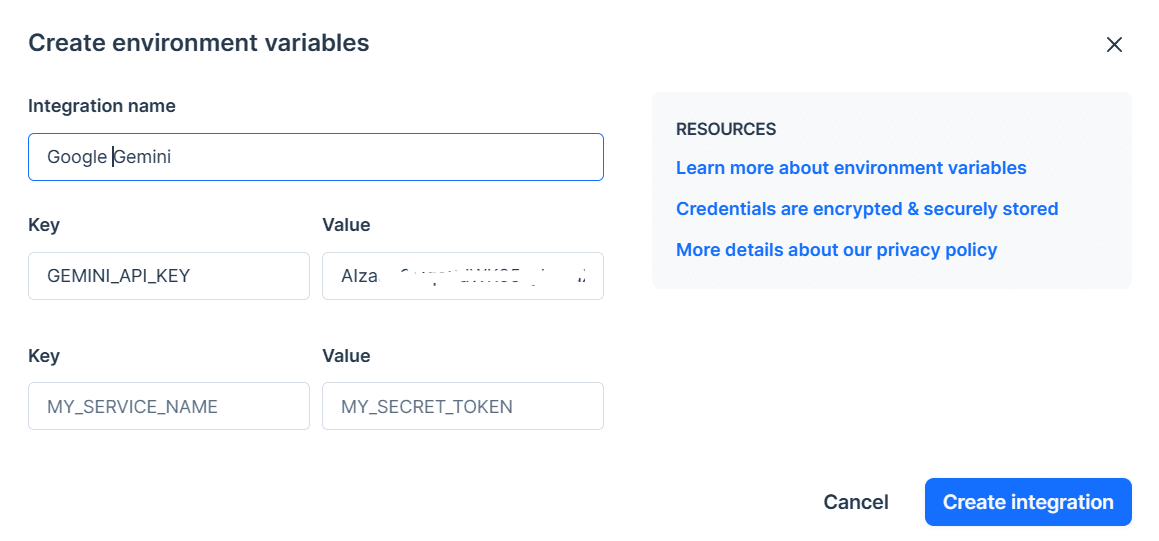

Copy the API key and set it as an environment variable. We are using Deepnote, where it is easy to set the key under the name "GEMINI_API_KEY". Just go to Integrations, scroll down, and select environment variables.
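Before configuring the client, it can help to confirm that the key is actually visible to the Python process. The snippet below is a minimal sketch using only the standard library; `load_gemini_api_key` is a hypothetical helper name introduced for illustration and is not part of any Google SDK.

```python
import os


def load_gemini_api_key(var_name: str = "GEMINI_API_KEY") -> str:
    """Return the API key stored in an environment variable.

    Hypothetical helper for illustration: it only reads the environment
    and fails early with a readable message if the key is missing.
    """
    key = os.environ.get(var_name, "").strip()
    if not key:
        raise RuntimeError(
            f"Environment variable {var_name!r} is not set or is empty."
        )
    return key
```

Failing early like this tends to give a clearer message than the `KeyError` raised by indexing `os.environ` directly.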

In the next step, we will install the Python API using PIP:

    pip install -q -U google-generativeai

After that, we will pass the API key to Google's GenAI library and initialize it.

    import google.generativeai as genai
    import os

    # Read the key from the environment variable set earlier
    gemini_api_key = os.environ["GEMINI_API_KEY"]
    genai.configure(api_key=gemini_api_key)

After setting the API key, using the Gemini Pro model to generate content is simple. Pass the prompt to the `generate_content` function and display the output as Markdown.

    from IPython.display import Markdown

    model = genai.GenerativeModel('gemini-pro')
    response = model.generate_content("Who is the GOAT in the NBA?")

    Markdown(response.text)

This is odd, but I don't agree with the list. However, I understand that it is all personal preference.

Gemini can generate several responses, called candidates, for a single prompt. You can select the most suitable one. In our case, we had only one response.

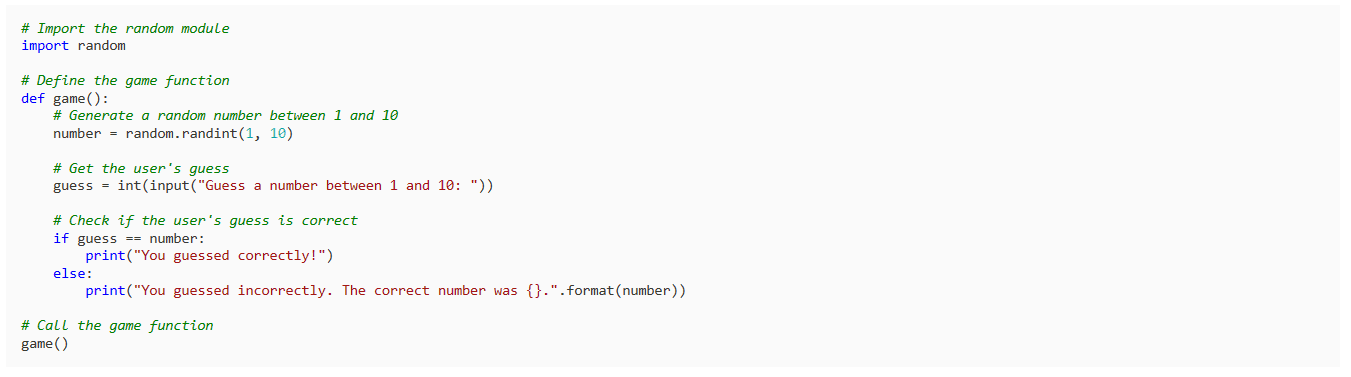

Let's ask it to write a simple game in Python.

    response = model.generate_content("Build a simple game in Python")

    Markdown(response.text)

The result is simple and to the point. Many LLMs start by explaining the Python code rather than writing it.

You can customize the response using the `generation_config` argument. We are limiting the candidate count to 1, adding the stop word "space", and setting the maximum output tokens and the temperature.

    response = model.generate_content(
        'Write a short story about guests.',
        generation_config=genai.types.GenerationConfig(
            candidate_count=1,
            stop_sequences=['space'],
            max_output_tokens=200,
            temperature=0.7,
        ),
    )

    Markdown(response.text)

As you can see, the response stopped before the word "space". Amazing.
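To make the stop-sequence behavior concrete, here is a small pure-Python sketch of the idea: the text is cut just before the first stop sequence that appears. This is an illustration only, not how the Gemini service implements it, and `truncate_at_stop` is a hypothetical helper name.

```python
def truncate_at_stop(text: str, stop_sequences: list[str]) -> str:
    """Cut `text` just before the earliest occurrence of any stop sequence.

    Illustrative helper (an assumption, not part of the Gemini SDK).
    """
    cut = len(text)
    for stop in stop_sequences:
        if not stop:
            continue  # ignore empty stop strings
        idx = text.find(stop)
        if idx != -1:
            cut = min(cut, idx)
    return text[:cut]


print(truncate_at_stop("They came from outer space to visit.", ["space"]))
```

In the real API the generation itself halts at the stop sequence, so tokens after it are never produced; the sketch only mimics the visible effect on the returned text.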

You can also use the `stream` argument to stream the response. It is similar to the Anthropic and OpenAI APIs but faster.

    model = genai.GenerativeModel('gemini-pro')
    response = model.generate_content("Write a Julia function for cleaning the data.", stream=True)

    # Print each chunk as soon as it arrives
    for chunk in response:
        print(chunk.text)
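When streaming, it is often useful to show chunks as they arrive and still keep the complete text at the end. The sketch below shows that pattern with stand-in chunk objects, so it runs without an API key. It assumes only that each chunk exposes a `.text` attribute, as in the loop above; `collect_stream` and `fake_stream` are hypothetical names.

```python
from types import SimpleNamespace
from typing import Iterable


def collect_stream(chunks: Iterable) -> str:
    """Print each chunk's text as it arrives and return the full text."""
    parts = []
    for chunk in chunks:
        print(chunk.text, end="", flush=True)
        parts.append(chunk.text)
    print()  # finish the line once the stream is exhausted
    return "".join(parts)


# Stand-in chunks that mimic a streamed response (not real API output)
fake_stream = (SimpleNamespace(text=t) for t in ["function clean", "(df)\n", "end"])
full_text = collect_stream(fake_stream)
```

With a real streamed response, the same function could be called as `collect_stream(response)`.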

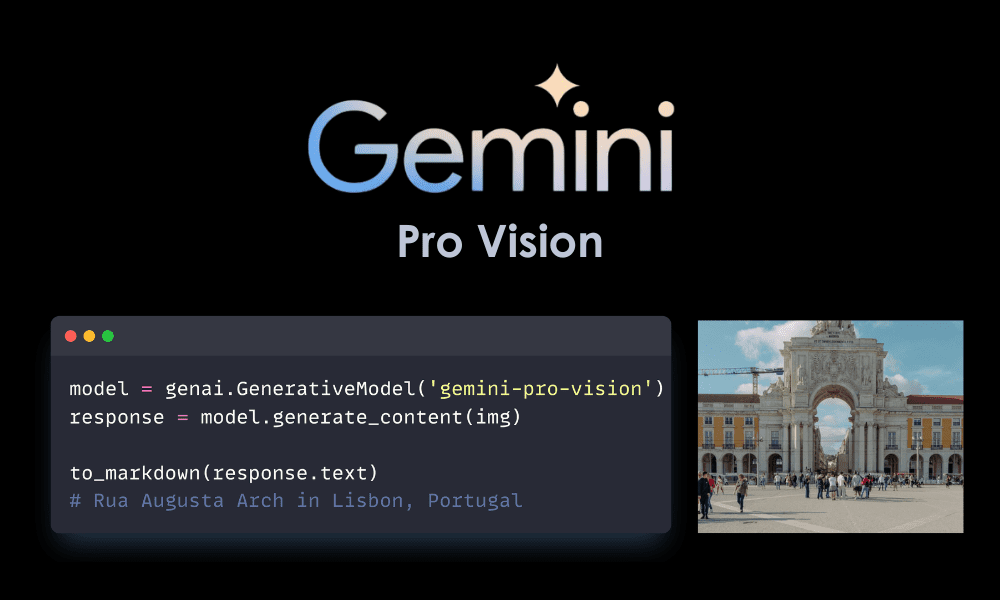

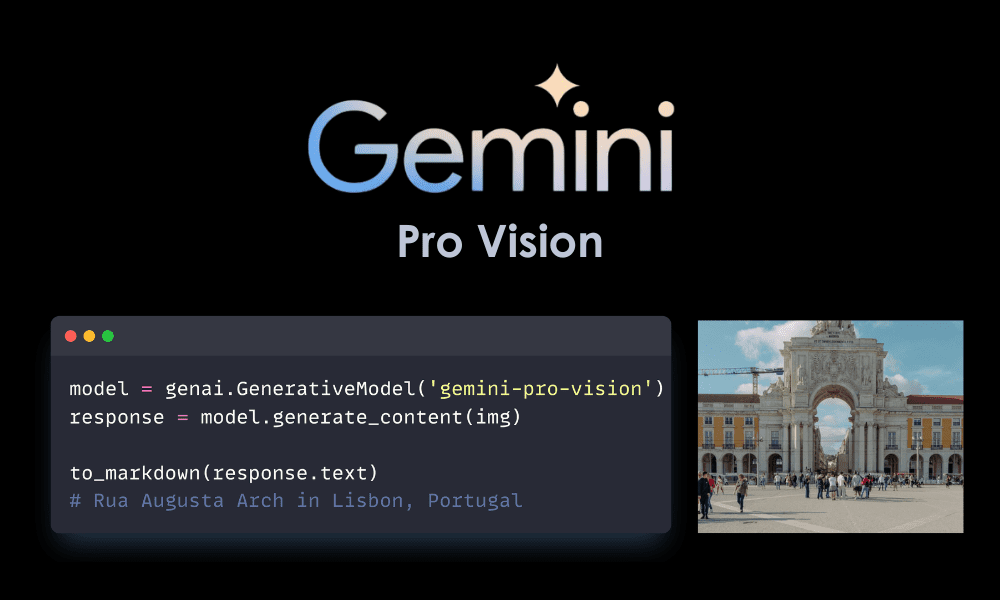

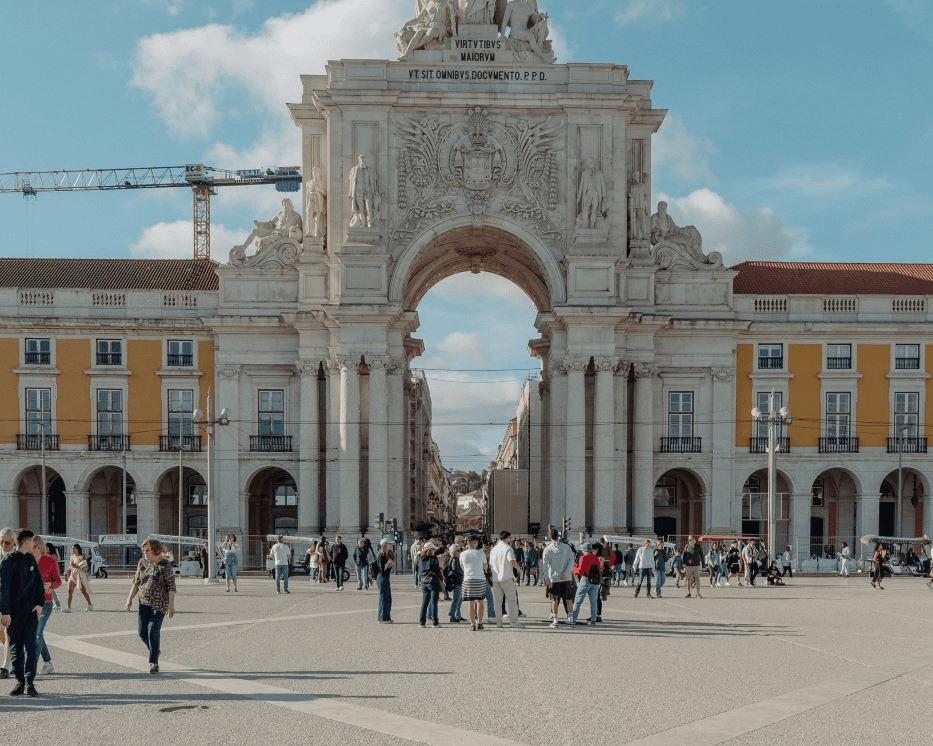

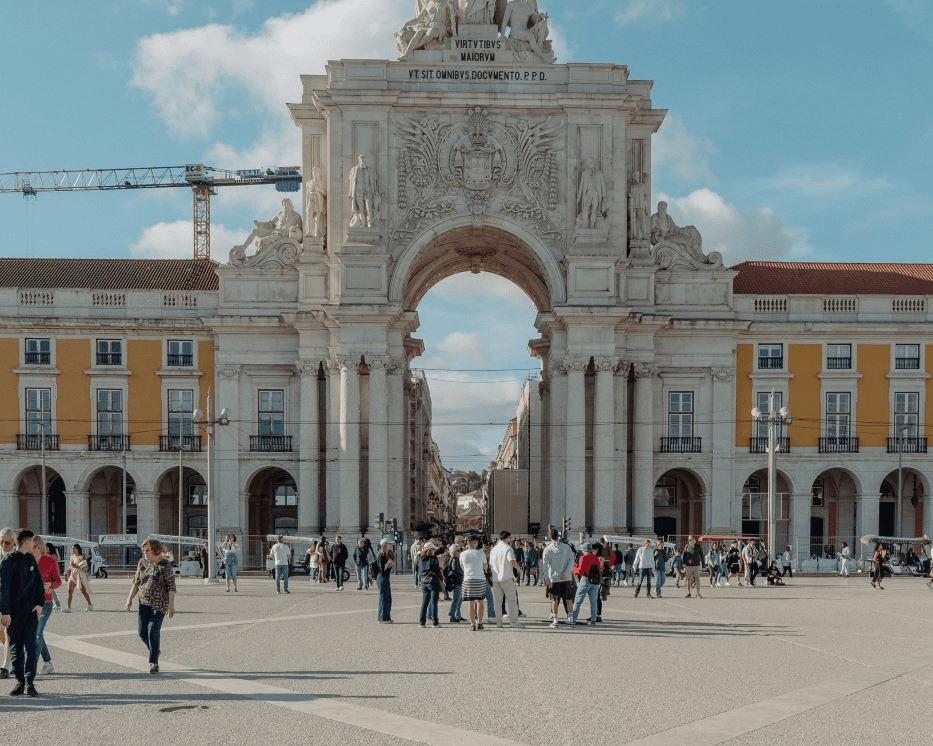

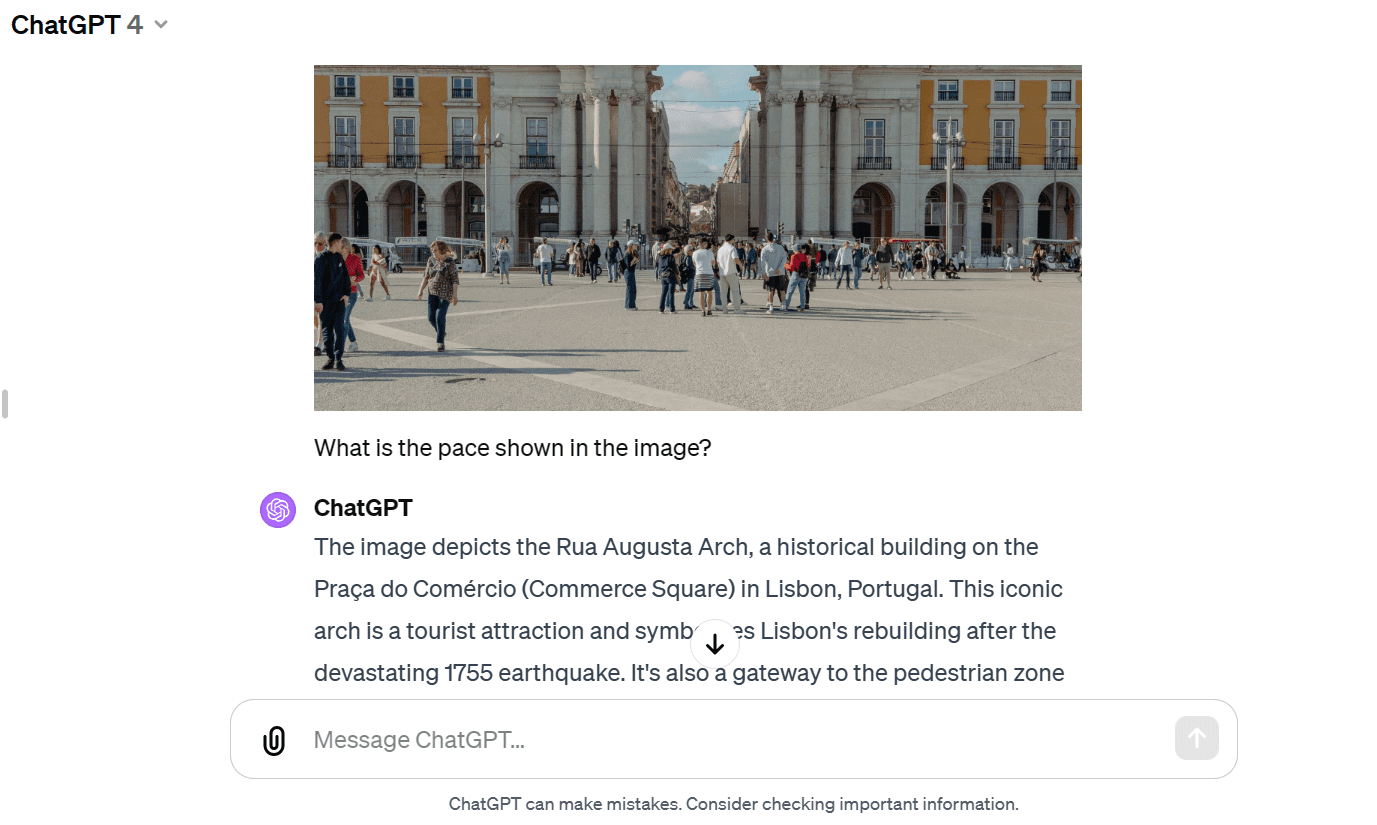

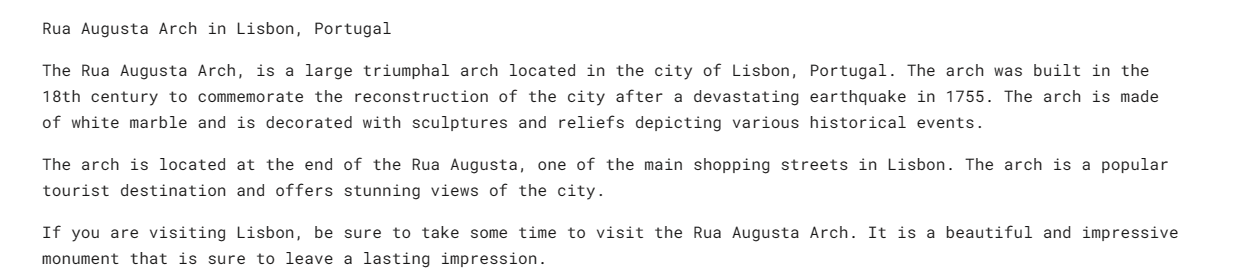

In this section, we will load a photo by Masood Aslami and use it to test the multimodality of Gemini Pro Vision. Load the image with `PIL` and display it.

    import PIL.Image

    img = PIL.Image.open('images/photo-1.jpg')
    img

We have a high-resolution image of the Rua Augusta Arch.

Let's load the Gemini Pro Vision model and provide it with the image.

    model = genai.GenerativeModel('gemini-pro-vision')
    response = model.generate_content(img)

    Markdown(response.text)

The model accurately identified the monument and provided information about its history and architecture.

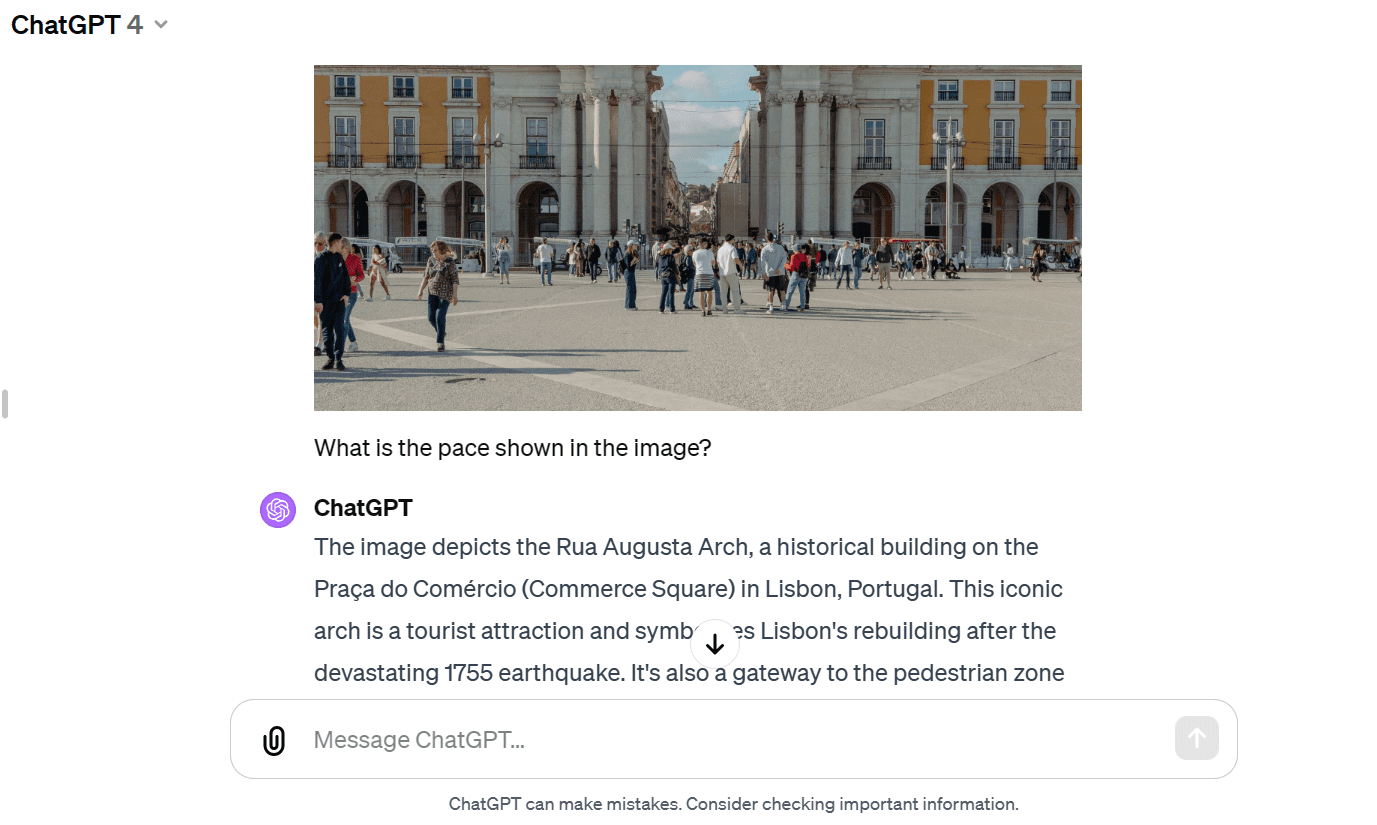

Let's provide the same image to GPT-4 and ask about it. Both models gave nearly identical answers, but I like the GPT-4 response more.

Now we will provide both text and the image to the API. We have asked the vision model to write a travel blog using the image as a reference.

    response = model.generate_content(["Write a travel blog post using the image as reference.", img])

    Markdown(response.text)

It gave me a short blog. I was hoping for a longer version.

Compared to GPT-4, the Gemini Pro Vision model struggled to generate a long-form blog.

We can set up the model to have a back-and-forth chat session. This way, the model remembers the context and responds using the previous conversation. In our case, we have started a chat session and asked the model to help me get started with Dota 2.

    model = genai.GenerativeModel('gemini-pro')
    chat = model.start_chat(history=[])

    chat.send_message("Can you please guide me on how to start playing Dota 2?")

    chat.history

As you can see, the `chat` object is saving the history of the user and model messages.

We can also display them in Markdown style.

    # `display` is available by default in notebooks
    for message in chat.history:
        display(Markdown(f'**{message.role}**: {message.parts[0].text}'))

Let's ask a follow-up question.

    chat.send_message("Which Dota 2 heroes should I start with?")

    for message in chat.history:
        display(Markdown(f'**{message.role}**: {message.parts[0].text}'))

We can scroll down and see the entire session with the model.
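The display loop can also be wrapped in a small function that returns the formatted lines, which makes it easy to log or save a session. The sketch below uses stand-in message objects so it runs without the API. It assumes each message has a `.role` and a `.parts` list whose first item has `.text`, as in the loop above. `format_history` and `fake_history` are hypothetical names, and the model reply is a placeholder rather than real output.

```python
from types import SimpleNamespace


def format_history(history) -> list[str]:
    """Return one Markdown line per message: '**role**: text'."""
    lines = []
    for message in history:
        text = message.parts[0].text  # first part holds the message text
        lines.append(f"**{message.role}**: {text}")
    return lines


# Stand-in messages that mimic the shape of chat history entries
fake_history = [
    SimpleNamespace(
        role="user",
        parts=[SimpleNamespace(text="Which Dota 2 heroes should I start with?")],
    ),
    SimpleNamespace(
        role="model",
        parts=[SimpleNamespace(text="(model reply goes here)")],
    ),
]

for line in format_history(fake_history):
    print(line)
```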

Embedding models are becoming increasingly popular for context-aware applications. The Gemini embedding-001 model allows words, sentences, or entire documents to be represented as dense vectors that encode semantic meaning. This vector representation makes it possible to compare the similarity between different pieces of text by comparing their embedding vectors.

We can pass the content to `embed_content` and convert the text into embeddings. It is that simple.

    output = genai.embed_content(
        model="models/embedding-001",
        content="Can you please guide me on how to start playing Dota 2?",
        task_type="retrieval_document",
        title="Embedding of Dota 2 question")

    # Show the first 10 values of the embedding vector
    print(output['embedding'][0:10])

[0.060604308, -0.023885584, -0.007826327, -0.070592545, 0.021225851, 0.043229062, 0.06876691, 0.049298503, 0.039964676, 0.08291664]

We can convert multiple chunks of text into embeddings by passing a list of strings to the `content` argument.

    output = genai.embed_content(
        model="models/embedding-001",
        content=[
            "Can you please guide me on how to start playing Dota 2?",
            "Which Dota 2 heroes should I start with?",
        ],
        task_type="retrieval_document",
        title="Embedding of Dota 2 question")

    # One embedding is returned per input string
    for emb in output['embedding']:
        print(emb[:10])

[0.060604308, -0.023885584, -0.007826327, -0.070592545, 0.021225851, 0.043229062, 0.06876691, 0.049298503, 0.039964676, 0.08291664]

[0.04775657, -0.044990525, -0.014886052, -0.08473655, 0.04060122, 0.035374347, 0.031866882, 0.071754575, 0.042207796, 0.04577447]
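Comparing two embeddings usually comes down to a cosine similarity. The following is a minimal sketch in plain Python. It uses only the first 10 values printed above, so the number it prints is illustrative and is not the similarity of the full vectors; `cosine_similarity` is a helper written for this example, not an SDK function.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("Vectors must have the same length.")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("Cosine similarity is undefined for zero vectors.")
    return dot / (norm_a * norm_b)


# First 10 values of each embedding, copied from the printed output above
first = [0.060604308, -0.023885584, -0.007826327, -0.070592545, 0.021225851,
         0.043229062, 0.06876691, 0.049298503, 0.039964676, 0.08291664]
second = [0.04775657, -0.044990525, -0.014886052, -0.08473655, 0.04060122,
          0.035374347, 0.031866882, 0.071754575, 0.042207796, 0.04577447]

print(cosine_similarity(first, second))
```

In practice you would pass the full vectors from `output['embedding']`; values closer to 1 indicate more similar texts.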

If you are having trouble reproducing the same results, check out my Deepnote workspace.

There are many advanced functions that we did not cover in this introductory tutorial. You can learn more about the Gemini API by visiting Gemini API: Quickstart with Python.

In this tutorial, we learned about Gemini and how to access the Python API to generate responses. In particular, we learned about text generation, visual understanding, streaming, conversation history, custom output, and embeddings. However, this only scratches the surface of what Gemini can do.

Feel free to share with me what you have built using the free Gemini API. The possibilities are endless.

Abid Ali Awan (@1abidaliawan) is a computer scientist with a passion for machine learning. Currently, he is focused on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master's degree in Technology Management and a bachelor's degree in Telecommunication Engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.